The world is producing an ever increasing volume, velocity, and variety of big data. Consumers and businesses are demanding up-to-the-second (or even millisecond) analytics on their fast-moving data, in addition to classic batch processing. AWS delivers many technologies for solving big data problems. But what services should you use, why, when, and how?

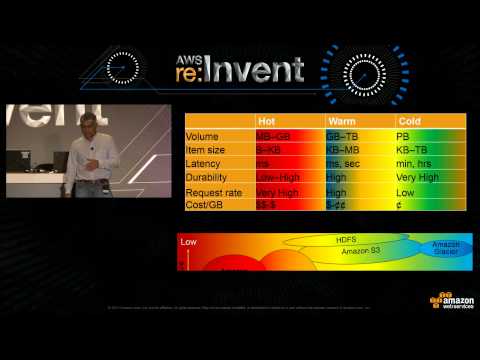

In this session, we simplify big data processing as a data bus comprising various stages: ingest, store, process, and visualize. Next, we discuss how to choose the right technology in each stage based on criteria such as data structure, query latency, cost, request rate, item size, data volume, durability, and so on. Finally, we provide reference architecture, design patterns, and best practices for assembling these technologies to solve your big data problems at the right cost.